Your AI Agents Have Amnesia: Why Memory Graphs Are ALSO Critical!

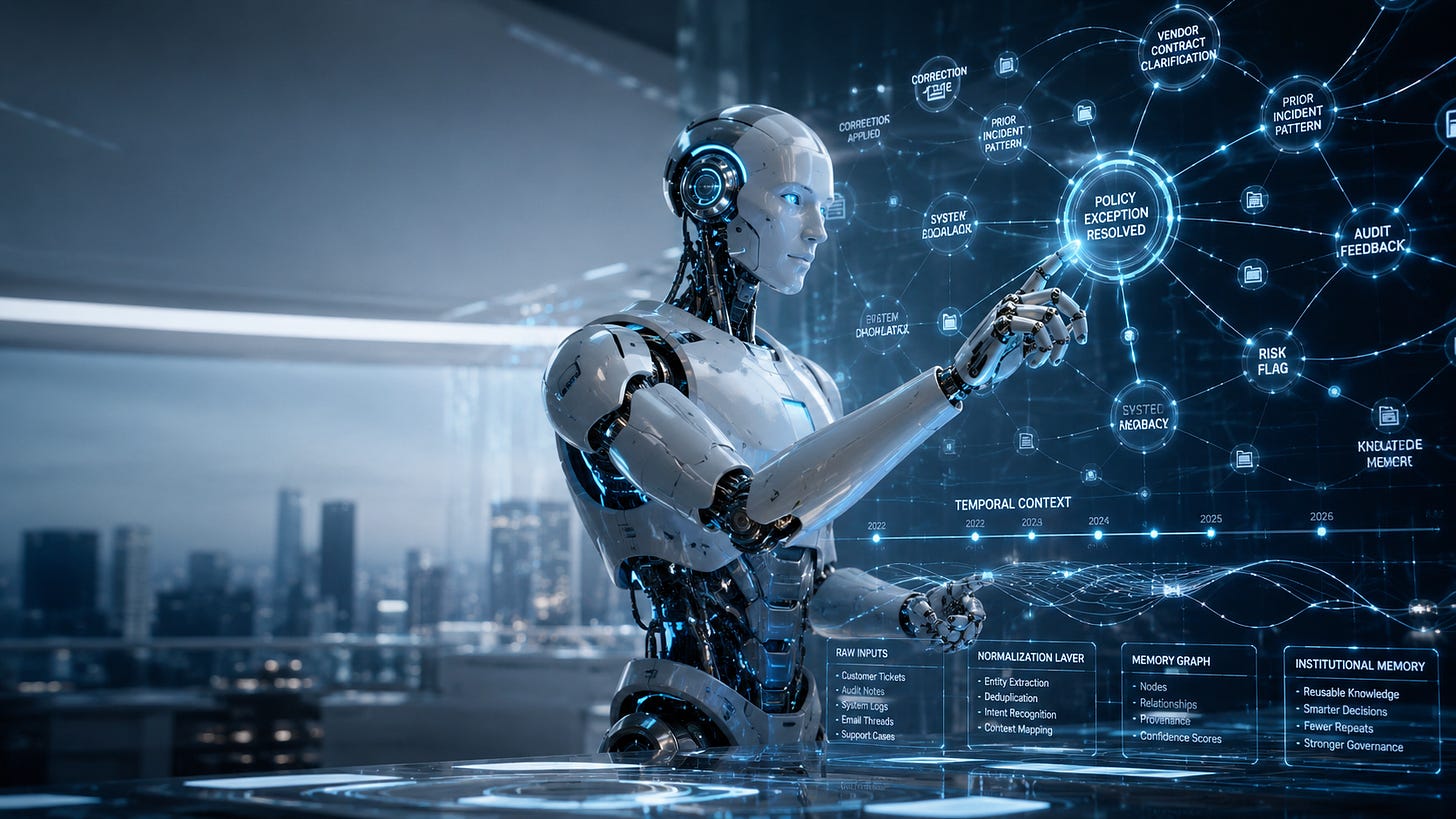

Memory Graphs turn corrections, exceptions, and business changes into reusable enterprise intelligence.

📌 THE POINT IS: Knowledge Graphs tell AI agents what is true. Context Graphs help them understand how decisions get made. Memory Graphs complete the system by turning human feedback, exceptions, and changing business reality into governed institutional learning. Without that layer, every correction is temporary, every exception is trapped in the moment, and every agent has amnesia.

Most companies still think about AI memory like it is a bigger chat history. That is the wrong mental model.

A long context window can help an agent remember what happened earlier in a conversation. That is useful, but it is not enterprise memory. A context window does not tell the agent which lessons should apply again, which were one-time exceptions, which require approval, and which are outdated.

If AI agents are going to become digital workers, they need a way to learn without turning every correction, preference, or exception into an uncontrolled operating rule.

“Without memory, AI agents forget user preferences, repeat questions, and contradict previously established facts.”

– Mem0, Building Production-Ready AI Agents with Scalable Long-Term Memory

Knowledge tells the agent what is true. Memory tells it what changed.

In the first article in this series, I wrote about Knowledge Graphs as the deterministic control plane for agentic AI. They help agents produce answers that are correct, consistent, and explainable.

In the second article, I wrote about Context Graphs as the layer that captures institutional judgment, especially when the answer is not cleanly written in a process document.

The Memory Graph is the third piece. It captures what the organization has learned over time: human corrections, customer outcomes, policy changes, approved exceptions, escalation patterns, and decisions that were valid yesterday but are no longer valid today.

That last point matters. Memory without time is dangerous.

“Traditional knowledge graphs treat facts as static, but real-world information evolves constantly.”

– OpenAI Cookbook, Temporal Agents with Knowledge Graphs

If an agent remembers that a customer exception was allowed last quarter, but does not know the policy changed this quarter, it is not smarter. It is confidently outdated.
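To make the time problem concrete, here is a minimal Python sketch, assuming each memory carries a validity window (`valid_from` inclusive, `valid_to` exclusive). The `MemoryFact` schema, the `recall` helper, and the customer data are hypothetical illustrations, not any specific product's API.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional

@dataclass(frozen=True)
class MemoryFact:
    """A remembered fact with a validity window (hypothetical schema)."""
    subject: str
    statement: str
    valid_from: date
    valid_to: Optional[date] = None  # None means the fact is still valid

    def is_valid_on(self, day: date) -> bool:
        # Valid if the day falls inside [valid_from, valid_to)
        return self.valid_from <= day and (self.valid_to is None or day < self.valid_to)

def recall(memories: list[MemoryFact], subject: str, as_of: date) -> list[MemoryFact]:
    """Return only the memories about `subject` that were valid on `as_of`."""
    return [m for m in memories if m.subject == subject and m.is_valid_on(as_of)]

memories = [
    MemoryFact("acme-corp", "Late-fee waiver allowed as an exception",
               valid_from=date(2024, 1, 1), valid_to=date(2024, 4, 1)),
    MemoryFact("acme-corp", "Late-fee waivers require finance approval",
               valid_from=date(2024, 4, 1)),
]

for m in recall(memories, "acme-corp", as_of=date(2024, 5, 15)):
    print(m.statement)  # Late-fee waivers require finance approval
```

Asking "as of" a date after the policy change returns only the newer memory. The old exception is still stored, so the agent can explain what used to be true without acting on it.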

A Memory Graph is not just a database

A Memory Graph is not one tool. It’s an architecture pattern.

In practice, it may include a graph database to represent entities and relationships, a vector store for semantic retrieval, an event log for auditability, a rules engine for deterministic policy enforcement, and a governance layer for approval, retention, access, and invalidation.
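As a rough illustration of how one write can touch several of these layers, here is a toy Python sketch. It is an assumption-laden stand-in, not a reference design: a dictionary plays the graph database, a keyword index stands in for the vector store (a real system would embed the text), and a list of JSON strings plays the event log. The rules engine and governance layer are left out for brevity, and `MemoryGraphSketch` and its methods are hypothetical names.

```python
import json
from collections import defaultdict
from datetime import datetime, timezone

class MemoryGraphSketch:
    """Toy composition of graph, retrieval, and audit layers (hypothetical)."""

    def __init__(self) -> None:
        # Graph layer: node -> {(relation, node)}
        self.edges: dict[str, set[tuple[str, str]]] = defaultdict(set)
        # Retrieval layer: keyword index standing in for a vector store
        self.index: dict[str, set[str]] = defaultdict(set)
        # Audit layer: append-only event log
        self.event_log: list[str] = []

    def remember(self, subject: str, relation: str, obj: str, note: str, actor: str) -> None:
        # 1. Graph: store the relationship between entities
        self.edges[subject].add((relation, obj))
        # 2. Retrieval: index the words of the note so the subject can be found later
        for word in note.lower().split():
            self.index[word].add(subject)
        # 3. Audit: record who wrote what, and when
        self.event_log.append(json.dumps({
            "at": datetime.now(timezone.utc).isoformat(),
            "actor": actor, "subject": subject, "relation": relation, "object": obj,
        }))

    def neighbors(self, subject: str) -> set[tuple[str, str]]:
        return set(self.edges.get(subject, set()))

    def search(self, word: str) -> set[str]:
        return set(self.index.get(word.lower(), set()))

mg = MemoryGraphSketch()
mg.remember("claim-type:water-damage", "escalates_to", "team:senior-adjusters",
            "Escalate when damage exceeds the policy limit", actor="j.doe")
print(mg.neighbors("claim-type:water-damage"))  # {('escalates_to', 'team:senior-adjusters')}
print(mg.search("escalate"))                    # {'claim-type:water-damage'}
```

The point of the sketch is that every memory write leaves three traces: a relationship the agent can traverse, an entry it can retrieve, and a record an auditor can inspect.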

A rules engine tells the agent what it must do. A Memory Graph tells the agent what the organization has learned. Those are related, but they are not the same thing.

“Customers in this segment usually ask for an escalation” is memory.

“Customers in this segment must receive this disclosure” is a rule.

Blend those together carelessly and your agents will start treating patterns like policy. That is how risk gets scaled.
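One way to keep the two apart is to give them different types and never merge them into one list. This minimal Python sketch assumes a hypothetical `Rule` type for obligations and a hypothetical `Memory` type for learned patterns with a confidence score. Rules are always returned as required actions; memories only ever appear as hints, filtered by an assumed `min_confidence` threshold.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Rule:
    """A deterministic obligation: applied every time, no judgment involved."""
    segment: str
    action: str

@dataclass(frozen=True)
class Memory:
    """A learned pattern: a hint for the agent, never an obligation."""
    segment: str
    observation: str
    confidence: float  # how often the pattern has held, 0.0 to 1.0

def plan_response(segment: str, rules: list[Rule], memories: list[Memory],
                  min_confidence: float = 0.5) -> dict[str, list[str]]:
    """Return rules and memories in separate fields so a pattern cannot silently become policy."""
    required = [r.action for r in rules if r.segment == segment]
    hints = [m.observation for m in memories
             if m.segment == segment and m.confidence >= min_confidence]
    return {"required": required, "hints": hints}

rules = [Rule("small-business", "Provide the required disclosure")]
memories = [Memory("small-business", "Customers usually ask for an escalation", 0.7)]
plan = plan_response("small-business", rules, memories)
print(plan)
# {'required': ['Provide the required disclosure'], 'hints': ['Customers usually ask for an escalation']}
```

Because the disclosure lives in `required` and the escalation pattern lives in `hints`, no confidence score, however high, can promote a pattern into policy.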

Personal memory and enterprise memory need different governance

Personal memory improves user experience. Enterprise memory improves organizational performance. Those two kinds of memory cannot live under the same governance model.

A personal memory might be, “Matt prefers concise answers.” Helpful. Low risk. Easy to delete. An enterprise memory might be, “This claim scenario requires escalation when these three conditions are present.” That needs lineage, approval, access controls, retention rules, and a clear invalidation process.
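A minimal Python sketch of those two lanes, assuming a simple in-memory store: personal memories are written directly and can be wiped by user ID, while enterprise memories start unapproved, record their source for lineage, need an approver other than the author, and are invalidated rather than deleted. `MemoryLanes` and every name, user, and source string below are hypothetical, and access controls and retention rules are left out.

```python
from dataclasses import dataclass
from typing import Optional

@dataclass
class PersonalMemory:
    user_id: str
    text: str

@dataclass
class EnterpriseMemory:
    text: str
    author: str
    source: str                               # lineage: where the lesson came from
    approved_by: Optional[str] = None
    invalidated_reason: Optional[str] = None

    @property
    def active(self) -> bool:
        # Only approved, non-invalidated lessons are served to agents
        return self.approved_by is not None and self.invalidated_reason is None

class MemoryLanes:
    """Two lanes with different controls (hypothetical design)."""

    def __init__(self) -> None:
        self.personal: dict[str, list[PersonalMemory]] = {}
        self.enterprise: list[EnterpriseMemory] = []

    # Personal lane: scoped to one user, written directly, easy to delete
    def add_personal(self, user_id: str, text: str) -> None:
        self.personal.setdefault(user_id, []).append(PersonalMemory(user_id, text))

    def forget_user(self, user_id: str) -> None:
        self.personal.pop(user_id, None)

    # Enterprise lane: proposed, approved by someone else, invalidated rather than deleted
    def propose(self, text: str, author: str, source: str) -> EnterpriseMemory:
        lesson = EnterpriseMemory(text, author, source)
        self.enterprise.append(lesson)
        return lesson

    @staticmethod
    def approve(lesson: EnterpriseMemory, approver: str) -> None:
        if approver == lesson.author:
            raise PermissionError("authors cannot approve their own lessons")
        lesson.approved_by = approver

    @staticmethod
    def invalidate(lesson: EnterpriseMemory, reason: str) -> None:
        lesson.invalidated_reason = reason  # record kept for audit

    def active_enterprise(self) -> list[str]:
        return [m.text for m in self.enterprise if m.active]

lanes = MemoryLanes()
lanes.add_personal("matt", "Prefers concise answers")
lanes.forget_user("matt")                     # gone immediately, no approval needed

lesson = lanes.propose("Escalate this claim scenario when all three conditions are present",
                       author="analyst-1", source="claims-review-2024-q2")
print(lanes.active_enterprise())              # []
lanes.approve(lesson, approver="claims-manager")
print(lanes.active_enterprise())              # ['Escalate this claim scenario when all three conditions are present']
```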

LangChain’s memory framework separates memory into facts, experiences, and learned behaviors. Those distinctions matter because different kinds of memory require different controls.

The most valuable enterprise memory will not come from generic chat logs. It will come from human correction. When a person steps in to fix an agent's wrong answer, or when a manager explains why an exception was allowed, that learning should not die inside the transaction.

5 things you can do now to prepare for Memory Graphs

Choose the memory architecture before you choose the agent.

Do not let every team bolt memory onto agents in a different way. Define the enterprise pattern first: graph storage, vector retrieval, metadata, audit logs, and business-rule integration.

Separate memory from rules.

Rules define what must happen. Memory captures what has been learned. If your agents cannot tell the difference between a pattern and a policy, they are not ready for autonomy.

Create separate lanes for enterprise memory and personal preference.

Personal memory should be scoped to the user, transparent, and easy to delete. Enterprise memory needs lineage, approval, access, retention rules, and invalidation.

Start with human corrections.

Pick one workflow where humans already correct AI outputs. Capture the correction, reason, source, outcome, and whether the lesson applies once, sometimes, or always.
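The fields in that step map naturally onto a small record. Here is a minimal Python sketch, assuming a hypothetical routing policy: "once" corrections stay in a case log, "sometimes" corrections go to a review queue before they become reusable memory, and "always" corrections go to a policy owner because they may belong in the rules engine rather than in memory. The queue names and the refund example are illustrative only.

```python
from dataclasses import dataclass
from enum import Enum

class Applies(Enum):
    ONCE = "once"
    SOMETIMES = "sometimes"
    ALWAYS = "always"

@dataclass(frozen=True)
class Correction:
    workflow: str
    original_output: str
    corrected_output: str
    reason: str
    source: str        # who made the correction
    outcome: str       # what happened afterwards
    applies: Applies   # how far the lesson should generalize

def route(c: Correction) -> str:
    """Decide where a correction goes (hypothetical routing policy)."""
    if c.applies is Applies.ONCE:
        return "case-log"              # keep for audit; do not reuse
    if c.applies is Applies.SOMETIMES:
        return "memory-review-queue"   # reusable only under stated conditions, after review
    return "policy-owner-review"       # "always" lessons may belong in the rules engine

c = Correction(
    workflow="refund-requests",
    original_output="Refund denied: outside the return window",
    corrected_output="Refund approved",
    reason="Item arrived damaged; the return window does not apply",
    source="supervisor:k.lee",
    outcome="customer retained",
    applies=Applies.SOMETIMES,
)
print(route(c))  # memory-review-queue
```

Capturing `applies` at the moment of correction is the cheap part; it is much harder to reconstruct later whether an exception was meant to generalize.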

Design forgetting into the system from day one.

Memory without expiration becomes operational debt. Policies change. Regulations change. Strategy changes. A Memory Graph must retire, supersede, or challenge old memories before they become scaled risk.
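A minimal Python sketch of designed-in forgetting, assuming every lesson carries a `review_by` date and a lifecycle status. Superseding links an old lesson to its replacement, retiring removes it from service, and a periodic job challenges any active lesson whose review date has passed so that it stops being served until someone re-confirms it. `Lesson`, the status names, and the sample lessons are hypothetical.

```python
from dataclasses import dataclass
from datetime import date
from enum import Enum
from typing import Optional

class Status(Enum):
    ACTIVE = "active"
    CHALLENGED = "challenged"
    SUPERSEDED = "superseded"
    RETIRED = "retired"

@dataclass
class Lesson:
    id: str
    text: str
    review_by: date
    status: Status = Status.ACTIVE
    superseded_by: Optional[str] = None

def supersede(old: Lesson, new: Lesson) -> None:
    # Keep the old lesson for history, but point it at its replacement
    old.status = Status.SUPERSEDED
    old.superseded_by = new.id

def retire(lesson: Lesson) -> None:
    lesson.status = Status.RETIRED

def challenge_overdue(lessons: list[Lesson], today: date) -> list[str]:
    """Flag active lessons past their review date; challenged lessons stop being served."""
    flagged = []
    for lesson in lessons:
        if lesson.status is Status.ACTIVE and lesson.review_by < today:
            lesson.status = Status.CHALLENGED
            flagged.append(lesson.id)
    return flagged

def servable(lessons: list[Lesson]) -> list[str]:
    return [lesson.id for lesson in lessons if lesson.status is Status.ACTIVE]

a = Lesson("mem-1", "Waive the fee for segment X", review_by=date(2024, 3, 31))
b = Lesson("mem-2", "Escalate disputes over $10k", review_by=date(2025, 1, 1))
c = Lesson("mem-3", "Fee waivers need finance approval", review_by=date(2025, 6, 30))
supersede(a, c)
flagged = challenge_overdue([a, b, c], today=date(2025, 2, 1))
print(flagged)             # ['mem-2']
print(servable([a, b, c])) # ['mem-3']
```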

This is where your digital workforce starts learning safely

Knowledge Graphs help agents know what is true. Context Graphs help agents understand how judgment gets applied. Memory Graphs complete the architecture by helping agents learn from what actually happened.

But enterprise memory cannot be a junk drawer of chat history, user preferences, and stale assumptions. It has to be governed infrastructure.

When designed well, the Memory Graph turns human feedback into institutional learning. Policy changes travel faster. Agent behavior gets more consistent. The digital workforce gets a safer way to improve over time.

That is the real unlock: agents that learn and scale under control.